GANHead: Towards Generative Animatable Neural Head Avatars

Abstract

To bring digital avatars into people’s lives, it is highly demanded to efficiently generate complete, realistic, and animatable head avatars. This task is challenging, and it is difficult for existing methods to satisfy all the requirements at once. To achieve these goals, we propose GANHead (Generative Animatable Neural Head Avatar), a novel generative head model that takes advantages of both the finegrained control over the explicit expression parameters and the realistic rendering results of implicit representations. Specifically, GANHead represents coarse geometry, finegained details and texture via three networks in canonical space to obtain the ability to generate complete and realistic head avatars. To achieve flexible animation, we define the deformation filed by standard linear blend skinning (LBS), with the learned continuous pose and expression bases and LBS weights. This allows the avatars to be directly animated by FLAME parameters and generalize well to unseen poses and expressions. Compared to state-of-the-art (SOTA) methods, GANHead achieves superior performance on head avatar generation and raw scan fitting.

Demo Video

The demo video shows the latent code sampling and head avatar generation results, followed by the animation results controlled by FLAME parameters.

Method

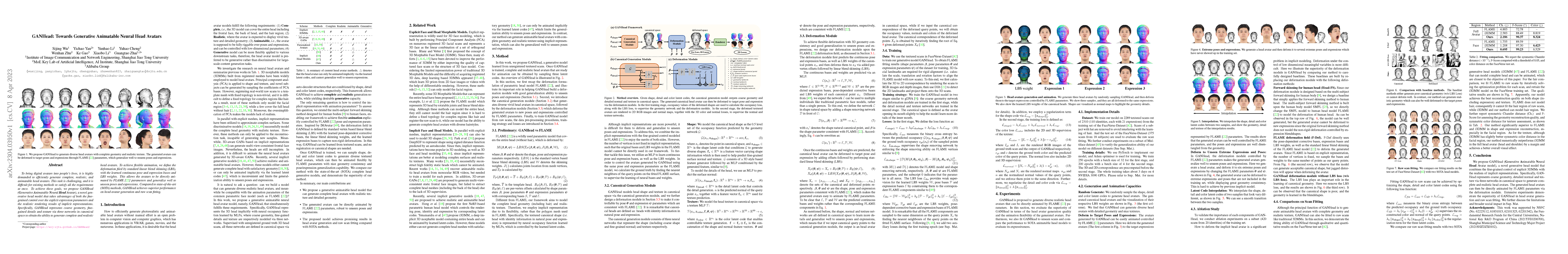

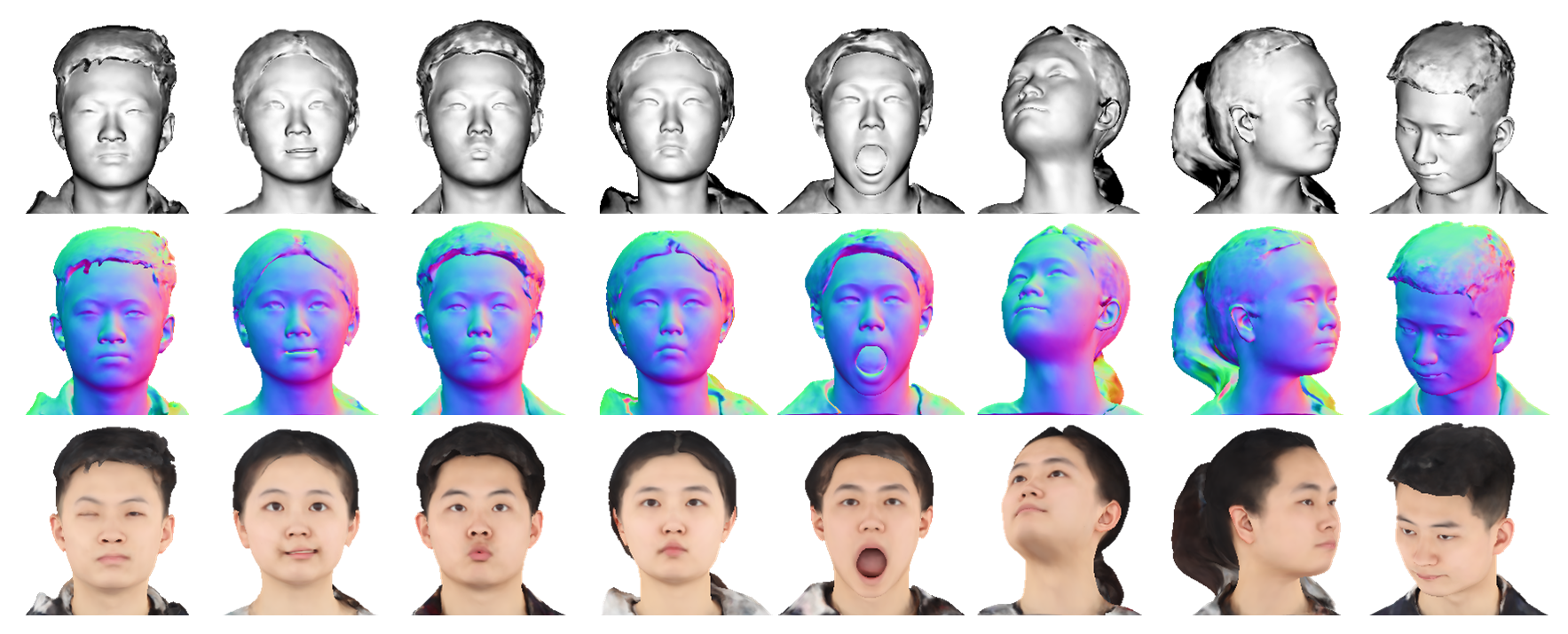

Given shape, detail and color latent codes, the canonical generation model outputs coarse geometry and detailed normal and texture in canonical space. The generated canonical head avatar can then be deformed to target pose and expression via the deformation module. In the first training stage, occupancy values of the deformed shapes are used to calculate the occupancy loss, along with the LBS loss, to supervise the geometry network and the deformation module. In the second stage, the deformed textured avatars are rendered to 2D RGB images and normal maps, together with the 3D color and normal losses, to supervise the normal and texture networks.

Application

Face Reenactment

We estimate the FLAME parameters of the sourse video, and use the estimated pose and expression parameters to animate the generated head avatars. The generated avatars can fully reproduce FLAME's poses and expressions.

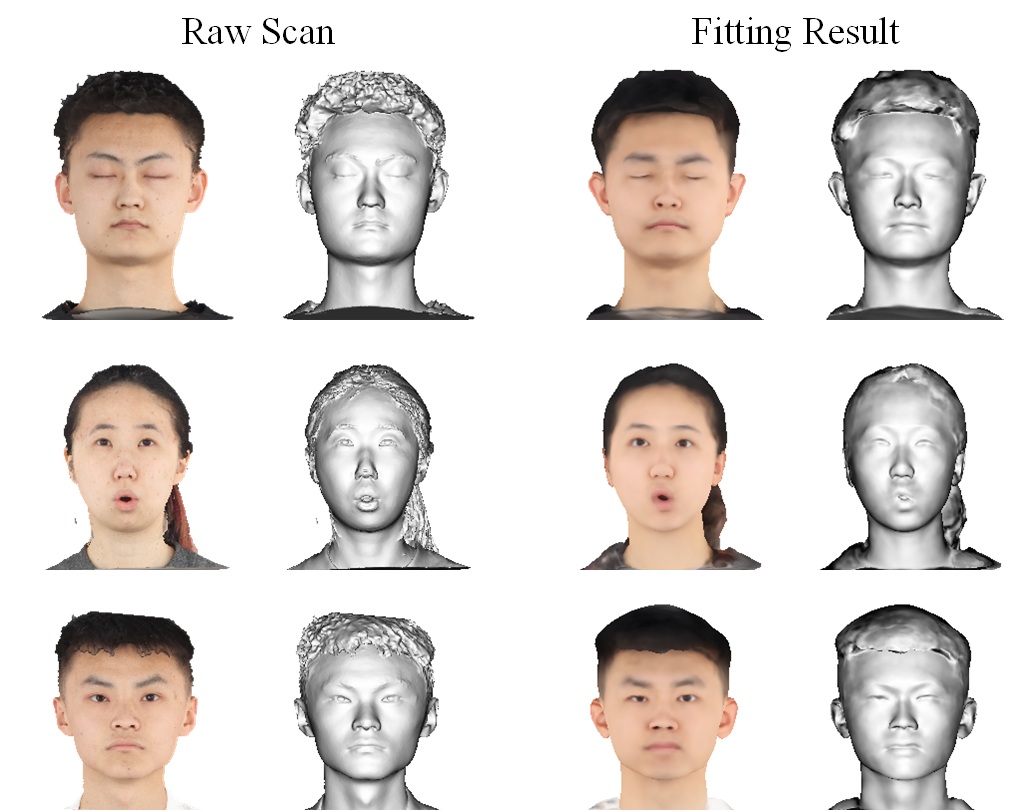

Raw Scan Fitting

Our model can also be fitted to raw scans to produce personal animatable head avatars.

Other Results

Sample Latent Codes |

Raw Scan Fitting |

|||||||

|

|

|

|

||||||

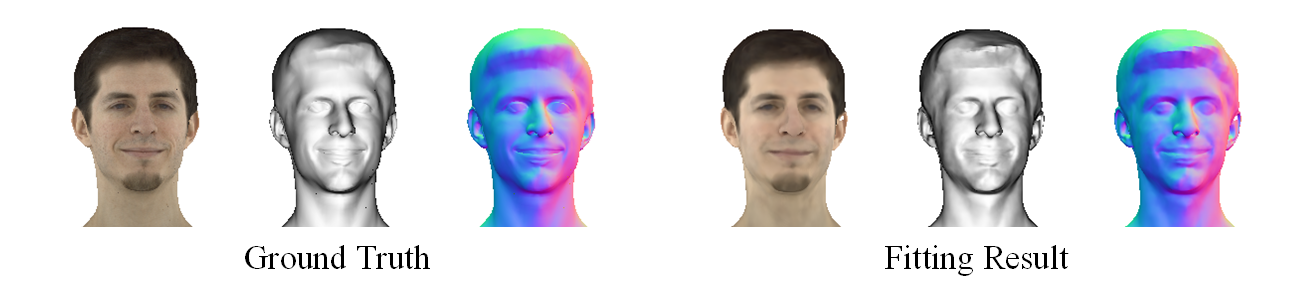

We also train our model on a subset of Multiface datase. Since Multiface datase has less detail and noise in the hair region, our model can learn more details of the facial region and achieve better fitting results.

Citation

@inproceedings{wu2023ganhead,

title={GANHead: Towards Generative Animatable Neural Head Avatars},

author={Wu, Sijing and Yan, Yichao and Li, Yunhao and Cheng, Yuhao and Zhu, Wenhan and Gao, Ke and Li, Xiaobo and Zhai, Guangtao},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={437--447},

year={2023}

}

Paper